AMD Instinct MI325X - 256GB CDNA3 for Inference

AMD Instinct MI325X specs, benchmarks, and analysis. 256GB HBM3e at 6 TB/s, 2.6 PFLOPS FP8, CDNA3 architecture - the memory-capacity upgrade to the MI300X targeting large model inference.

Overview

The AMD Instinct MI325X is a CDNA3-architecture AI accelerator that launched in October 2024, shipping in volume through Q1 2025 across Dell, HPE, Supermicro, and Lenovo platforms. It's best understood as an evolution of the MI300X rather than a new generation: same CDNA3 chiplet architecture, same compute units, meaningfully upgraded memory - 256GB of HBM3e versus the MI300X's 192GB, with memory bandwidth rising from 5.3 TB/s to 6 TB/s.

TL;DR

- CDNA3 GPU with 256GB HBM3e (33% more than MI300X) and 6 TB/s bandwidth (13% gain)

- 2.6 PFLOPS FP8 on the same 304 compute units as MI300X - compute unchanged from the predecessor

- Comes within 3-7% of H200 on MLPerf v5.0 Llama2 70B, pulls ahead at high batch sizes

- Cloud rental at $2.00-$2.25/hr; now overshadowed by MI350X on the roadmap

For operators running 70B+ parameter models or Mixture-of-Experts architectures with large KV caches, the 256GB capacity matters: it lets a single card hold substantially larger context windows or heavier model slices without swapping to host memory. In the inference market, AMD positioned the MI325X directly against the NVIDIA H200, which carries 141GB of HBM3e.

The chip fits into the same OAM (Open Accelerator Module) form factor as the MI300X, making it a drop-in upgrade for existing MI300X platforms when paired with compatible system firmware.

Key Specifications

| Specification | Details |
|---|---|
| Manufacturer | AMD |
| Product Family | Instinct MI300 Series |
| Architecture | CDNA3 |
| Process Node | TSMC 5nm (compute) + 6nm (IO) |
| Memory | 256GB HBM3e |
| Memory Bandwidth | 6,000 GB/s (6 TB/s) |
| FP8 Performance | 2,614.9 TFLOPS |
| FP8 with Sparsity | 5,229.8 TFLOPS |
| FP16 / BF16 | 1,307.4 TFLOPS |
| TF32 | 653.7 TFLOPS |
| FP32 | 163.4 TFLOPS |
| TDP | 1,000W |
| Peak Engine Clock | 2,100 MHz |
| Compute Units | 304 |
| Stream Processors | 19,456 |
| Matrix Cores | 1,216 |
| Form Factor | OAM (Open Accelerator Module) |
| Release Date | October 2024 |
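The compute rows in the table are internally consistent: each peak figure equals compute units × peak engine clock × a fixed per-CU throughput. The sketch below back-calculates those per-CU FLOPs-per-clock values (4,096 for FP8, 2,048 for FP16/BF16, 1,024 for TF32, 256 for FP32); they are inferred from the table rather than quoted from AMD documentation, and structured sparsity is assumed to simply double the dense FP8 rate.

```python
# Peak dense throughput = compute units x peak clock x FLOPs per clock per CU.
# The per-CU rates are back-calculated from the spec table (an assumption),
# not quoted from AMD documentation.
COMPUTE_UNITS = 304
PEAK_CLOCK_HZ = 2.1e9            # 2,100 MHz peak engine clock
STREAM_PROCESSORS_PER_CU = 64    # 304 x 64 = 19,456 stream processors
MATRIX_CORES_PER_CU = 4          # 304 x 4 = 1,216 matrix cores

FLOPS_PER_CLOCK_PER_CU = {
    "FP8": 4096,
    "FP16/BF16": 2048,
    "TF32": 1024,
    "FP32": 256,
}


def peak_tflops(flops_per_clock_per_cu: int) -> float:
    """Peak dense TFLOPS for a given per-CU, per-clock throughput."""
    return COMPUTE_UNITS * PEAK_CLOCK_HZ * flops_per_clock_per_cu / 1e12


if __name__ == "__main__":
    print(f"stream processors: {COMPUTE_UNITS * STREAM_PROCESSORS_PER_CU}")
    print(f"matrix cores:      {COMPUTE_UNITS * MATRIX_CORES_PER_CU}")
    for fmt, rate in FLOPS_PER_CLOCK_PER_CU.items():
        print(f"{fmt:>10}: {peak_tflops(rate):8.1f} TFLOPS")
    # Structured sparsity is assumed to double the dense FP8 rate.
    print(f"FP8 sparse: {2 * peak_tflops(FLOPS_PER_CLOCK_PER_CU['FP8']):8.1f} TFLOPS")
```

Because the MI300X shares the same CU count and peak clock, the same arithmetic reproduces its compute figures as well; only the memory rows differ.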

Performance Benchmarks

AMD submitted MI325X to MLPerf Inference v5.0 (April 2025), its first formal MLPerf participation for this chip. Results on Llama2 70B show an eight-GPU MI325X system running within 3-7% of a comparably configured H200 system. Image generation tasks also landed within 10% of H200.

| Benchmark | MI325X (8x GPU) | H200 (8x GPU) | MI300X (8x GPU) |
|---|---|---|---|
| MLPerf v5.0 Llama2 70B (offline) | ~97% of H200 | Baseline | ~82% of H200 (est.) |
| MLPerf v5.0 image gen | ~90% of H200 | Baseline | ~78% of H200 (est.) |
| Memory Capacity | 256GB | 141GB | 192GB |
| Memory Bandwidth | 6 TB/s | 4.8 TB/s | 5.3 TB/s |
| FP8 TFLOPS | 2,615 | 1,979 | 2,615 |

On memory bandwidth, the MI325X actually leads the H200 at 6 TB/s versus 4.8 TB/s. In its own benchmarking, AMD claims 40% higher throughput and 20-40% lower latency than the H200 on Mixtral and Llama 3.1. The MLPerf results don't reproduce that gap at standard batch sizes, but AMD's advantage does appear at higher concurrency and larger batch configurations, where memory capacity and bandwidth (holding and streaming a larger KV cache) become the binding constraints.
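The bandwidth side of that argument can be made concrete with a roofline-style ceiling: in single-stream decode, every generated token requires streaming the model weights from HBM, so tokens per second cannot exceed bandwidth divided by bytes read per token. This is a minimal sketch assuming a 70B-parameter model stored in FP8 (1 byte per parameter) on one card, with KV-cache reads and kernel overheads ignored; real throughput will be lower than these ceilings.

```python
# Roofline-style ceiling on single-stream decode speed for a memory-bound workload.
# Assumptions (illustrative): 70B parameters, FP8 weights (1 byte/param),
# weights read once per generated token, KV-cache reads and overheads ignored.
PARAMS = 70e9
BYTES_PER_PARAM = 1  # FP8

PEAK_BANDWIDTH_TBPS = {"MI325X": 6.0, "H200": 4.8, "MI300X": 5.3}


def max_tokens_per_second(bandwidth_tbps: float,
                          params: float = PARAMS,
                          bytes_per_param: float = BYTES_PER_PARAM) -> float:
    """Upper bound on tokens/s when each token requires streaming all weights once."""
    bytes_per_token = params * bytes_per_param
    return bandwidth_tbps * 1e12 / bytes_per_token


if __name__ == "__main__":
    for gpu, bw in PEAK_BANDWIDTH_TBPS.items():
        print(f"{gpu}: <= {max_tokens_per_second(bw):.1f} tokens/s per model copy")
    ratio = max_tokens_per_second(6.0) / max_tokens_per_second(4.8)
    print(f"MI325X / H200 bandwidth-bound ceiling: {ratio:.2f}x")
```

The 1.25x ratio is simply 6 / 4.8; it bounds the bandwidth advantage rather than predicting measured throughput. At larger batch sizes the weight reads are shared across concurrent requests, so KV-cache traffic and the capacity to hold it take over as the limit.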

Peak compute is unchanged between the MI325X and MI300X: both are rated at ~2,615 TFLOPS FP8. The upgrade is entirely in memory capacity and bandwidth.

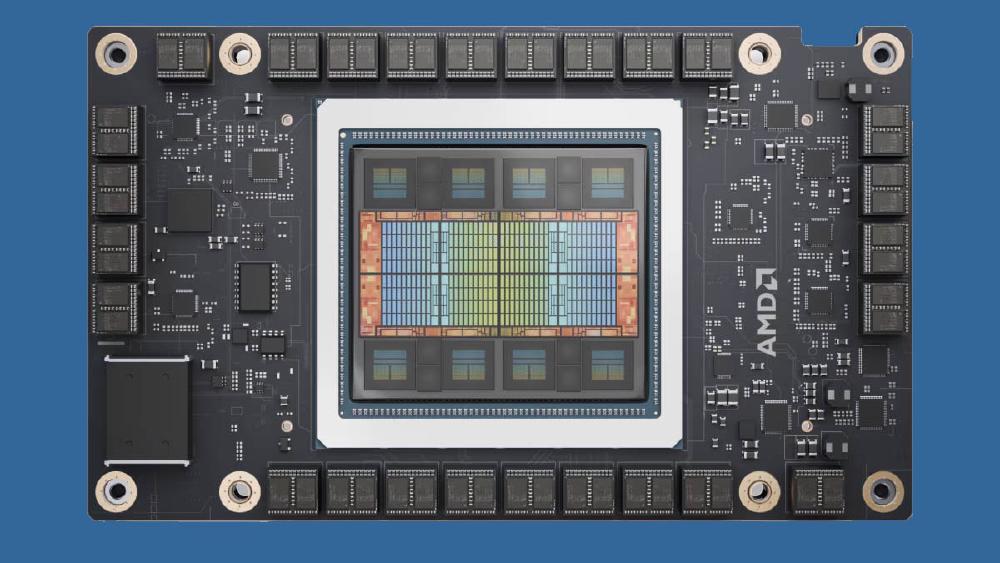

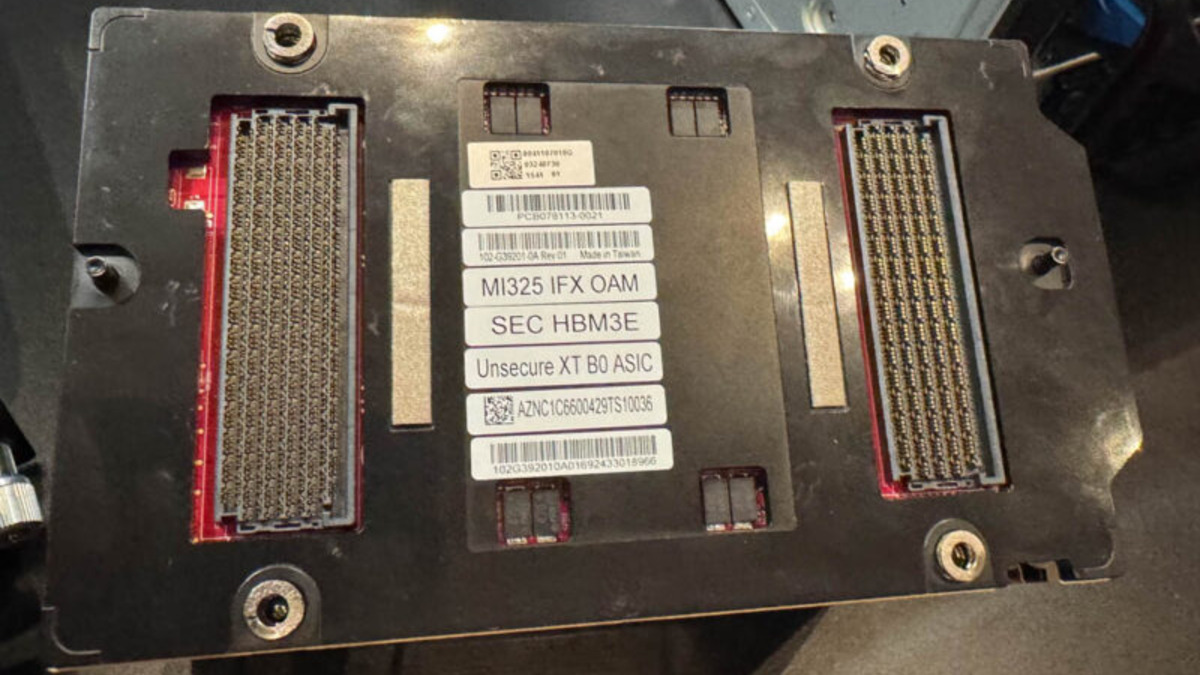

AMD Instinct MI325X in OAM form factor, with eight HBM3e stacks providing 256GB at 6 TB/s.

Source: servethehome.com


Key Capabilities

Memory Capacity as Differentiation

At 256GB per card, the MI325X carried more memory than any competing accelerator available at its October 2024 launch: the NVIDIA H200 offers 141GB, and the B200 tops out at 192GB. For inference workloads running Mixtral 8x22B, Llama 3.1 405B at reduced precision, or retrieval-augmented systems with large KV caches, fitting the model into fewer cards directly reduces inter-card communication overhead.
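One rough way to see the capacity argument is to count how many cards are needed just to hold the weights plus a KV cache. The sketch below assumes Llama 3.1 405B's published shape (126 layers, 8 KV heads, head dimension 128), FP8 weights and FP8 KV cache, a hypothetical 90% of HBM usable after runtime overhead, and an illustrative 128K-token cache budget. Activations and framework buffers are ignored, GB is treated as 10^9 bytes, and real deployments usually round up further to a power-of-two tensor-parallel degree.

```python
import math

# Hypothetical sizing helper: cards needed to hold weights + KV cache.
# Model shape is Llama 3.1 405B's published config; precision, usable
# fraction and context budget are illustrative assumptions.
PARAMS = 405e9
LAYERS, KV_HEADS, HEAD_DIM = 126, 8, 128
BYTES_PER_WEIGHT = 1         # FP8 weights
BYTES_PER_KV = 1             # FP8 KV cache
CACHED_TOKENS = 128 * 1024   # total tokens held in the KV cache across requests
USABLE_FRACTION = 0.9        # hypothetical share of HBM left after runtime overhead


def kv_bytes_per_token() -> int:
    """K and V tensors, per layer, per KV head, per head dimension."""
    return 2 * LAYERS * KV_HEADS * HEAD_DIM * BYTES_PER_KV


def cards_needed(hbm_gb: float) -> int:
    total_bytes = PARAMS * BYTES_PER_WEIGHT + CACHED_TOKENS * kv_bytes_per_token()
    usable_bytes = hbm_gb * 1e9 * USABLE_FRACTION
    return math.ceil(total_bytes / usable_bytes)


if __name__ == "__main__":
    print(f"KV cache per token: {kv_bytes_per_token():,} bytes")
    for gpu, hbm_gb in {"MI325X": 256, "MI300X": 192, "H200": 141}.items():
        print(f"{gpu} ({hbm_gb}GB): {cards_needed(hbm_gb)} cards")
```

Under these assumptions the model and cache fit on two MI325X cards, versus three MI300X or four H200 cards.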

The tradeoff is power: 1,000W TDP places the MI325X in the same league as the NVIDIA B200 (1,000W) but well above the H200 (700W). The difference compounds at rack level: at TDP, an 8-GPU MI325X node draws 8kW for the GPUs alone, versus 5.6kW for an 8-GPU H200 node.
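The node-level figures extend naturally to annual energy. This sketch counts GPU TDP only (CPUs, NICs, fans and power-supply losses are excluded) and assumes worst-case sustained draw at full TDP around the clock; the MI300X's 750W figure comes from the comparison later in this article.

```python
# GPU-only power per 8-GPU node at TDP. CPUs, NICs, fans and power-supply
# losses are excluded; sustained draw at full TDP is a worst-case assumption.
GPUS_PER_NODE = 8
HOURS_PER_YEAR = 24 * 365
TDP_W = {"MI325X": 1000, "B200": 1000, "MI300X": 750, "H200": 700}


def node_gpu_power_kw(gpu: str, gpus: int = GPUS_PER_NODE) -> float:
    """Aggregate GPU TDP for one node, in kilowatts."""
    return gpus * TDP_W[gpu] / 1000


if __name__ == "__main__":
    for gpu in TDP_W:
        print(f"{gpu:>6}: {node_gpu_power_kw(gpu):.1f} kW per node")
    delta_kw = node_gpu_power_kw("MI325X") - node_gpu_power_kw("H200")
    print(f"MI325X vs H200: +{delta_kw:.1f} kW per node, "
          f"{delta_kw * HOURS_PER_YEAR:,.0f} extra kWh per node-year at full TDP")
```

Multiplying the per-node delta by the number of nodes in a rack gives the extra power and cooling budget a facility must plan for.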

CDNA3 Architecture and ROCm

The MI325X uses the same CDNA3 chiplet design as the MI300X: multiple compute dies on a common substrate, unified memory across all chiplets, and a flat memory address space visible to the entire chip. CDNA3 introduced native FP8 matrix operations to AMD's Instinct line for the first time, which is why the FP8 and FP8-with-sparsity figures matter: they are executed by dedicated matrix cores, AMD's counterpart to the Tensor Cores in NVIDIA Hopper chips.

Software support runs through ROCm, AMD's open-source GPU compute platform. The MI325X works with PyTorch, JAX, and vLLM through the HIP runtime. Software compatibility has improved substantially since the MI300X shipped, with ROCm 6.x adding better support for attention kernels and flash attention variants. It's still behind CUDA's ecosystem depth, but the gap has narrowed.
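In practice, ROCm builds of PyTorch expose AMD GPUs through the existing `torch.cuda` API, and set `torch.version.hip` rather than `torch.version.cuda`. The helper below is a minimal sketch for checking which backend an install targets; the function name `describe_accelerator` is hypothetical, and it returns an empty report instead of failing when PyTorch is not installed.

```python
def describe_accelerator() -> dict:
    """Report which GPU backend the installed PyTorch build targets (hypothetical helper)."""
    info = {"torch_installed": False, "backend": None, "hip_version": None, "devices": []}
    try:
        import torch
    except ImportError:
        return info

    info["torch_installed"] = True
    # ROCm builds set torch.version.hip; CUDA builds set torch.version.cuda.
    info["hip_version"] = getattr(torch.version, "hip", None)
    if info["hip_version"]:
        info["backend"] = "rocm"
    elif getattr(torch.version, "cuda", None):
        info["backend"] = "cuda"
    else:
        info["backend"] = "cpu"

    # On ROCm, AMD GPUs are still enumerated through the torch.cuda namespace.
    if torch.cuda.is_available():
        info["devices"] = [torch.cuda.get_device_name(i)
                           for i in range(torch.cuda.device_count())]
    return info


if __name__ == "__main__":
    print(describe_accelerator())
```

Because the `torch.cuda` namespace is reused, most PyTorch code written for NVIDIA GPUs runs on ROCm without device-string changes; custom CUDA kernels are where porting effort concentrates.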

Comparison to MI300X

The MI325X is not a separate generation. It uses the same chiplet configuration as the MI300X with a higher-density HBM3e stack upgrade. This means:

- Existing MI300X system designs can accommodate MI325X with firmware updates, provided the platform's power delivery and cooling are rated for the higher 1,000W TDP

- No new compiler or kernel work is required: both chips share the gfx942 ISA target, so kernels built for MI300X run on MI325X unchanged

- Peak compute per watt drops by about 25%: the same ~2.6 PFLOPS FP8 now comes at 1,000W instead of the MI300X's 750W

The memory upgrade is the sole reason to choose MI325X over MI300X.
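The efficiency tradeoff can be quantified from the spec sheets. This sketch divides peak dense FP8 TFLOPS and peak HBM bandwidth by TDP, using the figures from the tables in this article; these are spec-sheet ratios, not measured efficiency, which depends on how close each chip runs to its TDP under a given workload.

```python
# Peak (spec-sheet) efficiency, not measured efficiency: FP8 dense TFLOPS and
# HBM bandwidth divided by TDP. Figures come from the tables in this article.
SPECS = {
    #          FP8 TFLOPS, bandwidth GB/s, TDP W
    "MI325X": (2615, 6000, 1000),
    "MI300X": (2615, 5300, 750),
    "H200":   (1979, 4800, 700),
}


def per_watt(gpu: str) -> tuple:
    """Return (peak FP8 TFLOPS per watt, peak GB/s per watt)."""
    tflops, bw_gbps, tdp_w = SPECS[gpu]
    return tflops / tdp_w, bw_gbps / tdp_w


if __name__ == "__main__":
    for gpu in SPECS:
        tf_w, gb_w = per_watt(gpu)
        print(f"{gpu}: {tf_w:.2f} TFLOPS/W, {gb_w:.2f} GB/s per W")
    change = per_watt("MI325X")[0] / per_watt("MI300X")[0] - 1
    print(f"MI325X vs MI300X peak FP8 per watt: {change:+.0%}")
```

On these spec-sheet ratios the MI325X trails both the MI300X and the H200 per watt, for compute and for bandwidth; its case rests on capacity per card rather than efficiency.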

Pricing and Availability

Cloud providers including Vultr list MI325X instances, with per-GPU rental running approximately $2.00-$2.25/hr as of early 2026. That places it at roughly the same price as the MI300X, which had dropped from earlier premium pricing. The MI350X (CDNA4, 288GB HBM3e) is now entering the market, and the MI325X's cloud availability is patchy as providers begin transitioning their GPU fleets.
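At those rates, the cost of a full 8-GPU node is simple arithmetic. The sketch uses the quoted $2.00-$2.25 per-GPU-hour range and assumes 730 hours per month (8,760 hours / 12); actual provider billing, reserved discounts and node configurations will differ.

```python
# Rental cost for an 8-GPU MI325X node at the per-GPU rates quoted above.
# 730 hours/month is an averaging assumption (8,760 h / 12).
RATE_RANGE_USD_PER_GPU_HR = (2.00, 2.25)
GPUS = 8
HOURS_PER_MONTH = 730


def node_cost_usd(rate_per_gpu_hr: float, gpus: int = GPUS, hours: float = 1.0) -> float:
    """Cost of renting `gpus` cards for `hours` at a flat per-GPU-hour rate."""
    return rate_per_gpu_hr * gpus * hours


if __name__ == "__main__":
    for rate in RATE_RANGE_USD_PER_GPU_HR:
        print(f"${rate:.2f}/GPU-hr -> ${node_cost_usd(rate):.2f}/node-hr, "
              f"${node_cost_usd(rate, hours=HOURS_PER_MONTH):,.0f}/node-month")
```

That works out to $16-$18 per node-hour, or roughly $11,700-$13,100 per node-month of continuous use.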

No major hyperscaler (AWS, Google Cloud, or Azure) has announced dedicated MI325X VM SKUs. Outside specialist GPU clouds such as Vultr, MI325X capacity is deployed mostly on-premises through OEMs and system integrators, and organizations rolling out at scale typically buy through HPE, Dell, Lenovo, Supermicro, or Gigabyte platforms.

List pricing for an 8-GPU system hasn't been formally published by AMD. ServeTheHome noted that the MI325X OAM module fits into existing MI300X-compatible baseplates.

Strengths and Weaknesses

Strengths

- 256GB HBM3e - more capacity than any competitor at the same generation point

- 6 TB/s bandwidth outpaces NVIDIA H200's 4.8 TB/s, benefiting memory-bound inference

- Drop-in upgrade path for MI300X-compatible OAM platforms

- Within 3-7% of H200 on MLPerf at standard batch sizes, ahead at high concurrency

- ROCm software ecosystem continues maturing with better kernel coverage

Weaknesses

- 1,000W TDP (vs H200's 700W) means about 43% more GPU power per card, raising rack power and cooling costs accordingly

- Compute unchanged vs MI300X - the upgrade is memory only

- No hyperscaler VM SKUs; availability is limited to specialist GPU clouds and on-premises OEM deployments

- Already being superseded by MI350X (CDNA4) which adds substantially more performance

- CUDA's software ecosystem depth still exceeds ROCm's for custom kernel development

Related Coverage

- AMD Instinct MI300X - Predecessor chip with identical compute, less memory

- AMD Instinct MI350X - Next generation CDNA4 chip with major performance leap

- NVIDIA H200 - Primary competitor the MI325X was designed to challenge

✓ Last verified April 1, 2026