AMD Helios: 72-GPU Rack for AI at Scale

AMD Helios packs 72 Instinct MI455X GPUs and 31 TB HBM4 into a single rack delivering 2.9 FP4 ExaFLOPS for AI workloads.

TL;DR

- 72 AMD Instinct MI455X GPUs in a single double-wide rack - 31 TB of HBM4 and 1.4 PB/s of aggregate memory bandwidth

- 2.9 FP4 ExaFLOPS at the rack level; 1.4 ExaFLOPS FP8 - roughly 2x the FP4 compute of the GB300 NVL72 on paper

- 18 compute trays, each holding 4 MI455X GPUs and 1 EPYC Venice CPU, in a design based on Meta's 2025 OCP rack spec

- Scale-out networking uses 43 TB/s Ethernet - open standard, not proprietary; the ROCm software ecosystem is still the biggest wildcard

- H2 2026 availability; Oracle committed to 50,000 GPUs in Helios racks; pricing not announced

Overview

AMD Helios is a rack-scale AI platform, not a GPU. AMD announced it at CES 2026 with the Instinct MI455X accelerator that powers it, and the two products are inseparable. You don't buy a Helios rack and choose your GPU - the MI455X is the only option, and the Helios mechanical design exists specifically to house 72 of them.

The system puts 72 MI455X GPUs and 18 EPYC Venice CPUs into a double-wide rack weighing roughly 7,000 pounds, liquid-cooled all through. Those 72 GPUs deliver 2.9 FP4 ExaFLOPS and hold 31 TB of HBM4 across their 432 GB per-chip allotments. For comparison, the NVIDIA GB300 NVL72 delivers roughly 1.44 ExaFLOPS FP4 (dense) across 72 B300 GPUs with 20.7 TB of HBM3e total. On raw specs, AMD's rack-level numbers look strong. Whether they hold up in real workloads is a different question - one that won't be answered until hardware ships in the second half of 2026.

AMD built Helios on Meta's 2025 OCP (Open Compute Project) rack specification. The OCP foundation matters for data center operators: it means Helios fits into the same infrastructure that major hyperscalers have already built around OCP standards, rather than requiring proprietary rack designs. HPE is the rack architecture partner; Celestica handles manufacturing. Oracle committed to a 50,000-GPU deployment - the first large-scale Helios commitment confirmed publicly.

The compute tray design is a departure from typical GPU server architecture. Each of the 18 trays houses 4 MI455X GPUs with a single EPYC Venice (Zen 6) CPU. This tight CPU-GPU pairing per tray means CPU resources are distributed close to the compute - a different approach from the GB300's model of 36 Grace CPUs paired with 72 B200s across the entire rack. AMD hasn't disclosed exactly how inter-tray communication and CPU memory coherency work at the rack level.

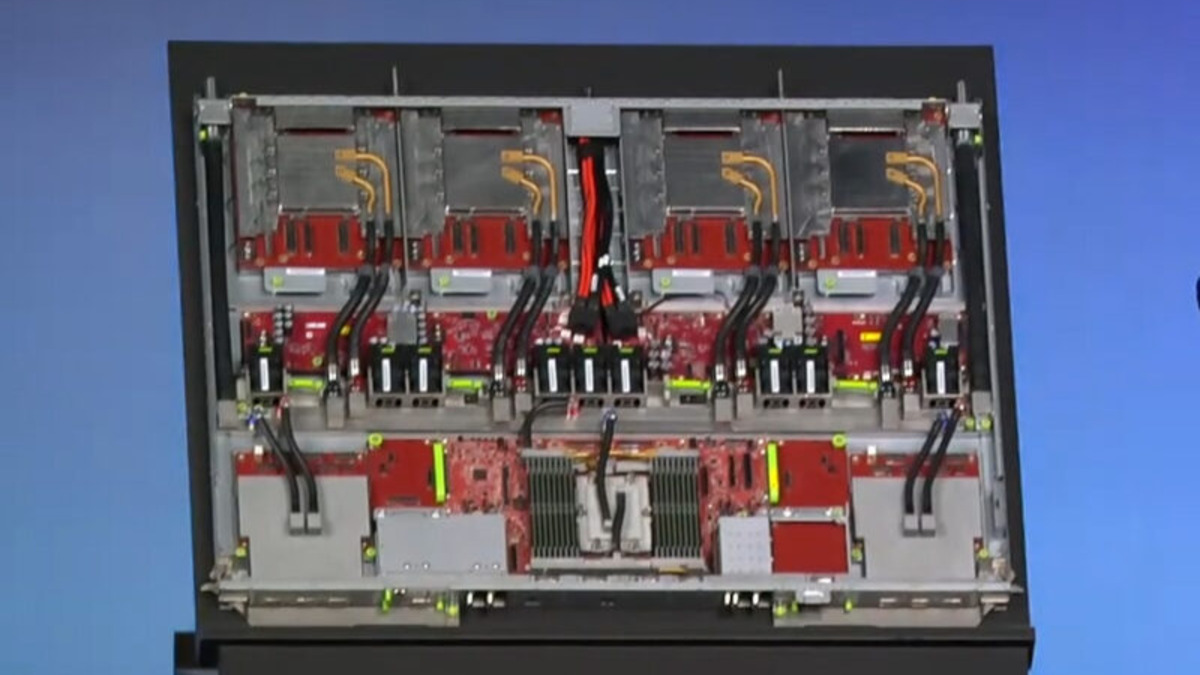

One of Helios's 18 compute trays, shown at CES 2026. Each tray integrates four MI455X accelerators with one EPYC Venice CPU.

Source: servethehome.com

One of Helios's 18 compute trays, shown at CES 2026. Each tray integrates four MI455X accelerators with one EPYC Venice CPU.

Source: servethehome.com

Key Specifications

Rack-Level Specifications

| Specification | Value |

|---|---|

| Manufacturer | AMD |

| System Type | Rack-scale AI platform |

| GPU Accelerators | 72 x Instinct MI455X |

| CPUs | 18 x EPYC Venice (Zen 6) |

| Compute Trays | 18 (4 x MI455X + 1 x EPYC Venice each) |

| Total HBM4 Memory | 31 TB (72 x 432 GB) |

| Aggregate Memory Bandwidth | 1.4 PB/s |

| FP4 Performance | 2.9 ExaFLOPS (inference) |

| FP8 Performance | 1.4 ExaFLOPS (training) |

| Scale-Up Interconnect | 260 TB/s |

| Scale-Out Networking | 43 TB/s Ethernet |

| Rack Design Basis | Meta 2025 OCP specification |

| Rack Partners | HPE (architecture), Celestica (manufacturing) |

| Weight | ~7,000 lbs |

| Form Factor | Double-wide rack |

| Cooling | Liquid cooled |

| Availability | H2 2026 |

| Pricing | Not announced |

Per-GPU MI455X Specifications

| Specification | Value |

|---|---|

| Architecture | CDNA 4 |

| Process Node | TSMC 2nm (compute) + TSMC 3nm (IO) |

| Transistors | 320 billion |

| HBM4 Capacity | 432 GB (12 x 36 GB stacks) |

| Memory Bandwidth | ~19.4 TB/s |

| FP4 Performance | 40 PFLOPS |

| FP8 Performance | ~19.4 PFLOPS |

| Scale-Up Bandwidth | 3.6 TB/s |

| Scale-Out Bandwidth | 300 GB/s (Ultra Ethernet) |

| TDP | Not disclosed |

Performance vs NVIDIA Rack Systems

No independent benchmarks exist for Helios. The system hasn't shipped, and AMD hasn't opened the hardware to third-party testers. Every number in this section is AMD-sourced, and that's worth treating with appropriate skepticism.

AMD's rack-level FP4 claim - 2.9 ExaFLOPS - compares against specific values for NVIDIA's current rack offerings. The GB300 NVL72 delivers roughly 1.44 ExaFLOPS FP4 (dense) across 72 B300 GPUs. The Vera Rubin NVL144, which also targets H2 2026, is projected at 3.6 ExaFLOPS FP4 across 144 R200 GPUs.

| Metric | AMD Helios | NVIDIA GB300 NVL72 | NVIDIA Vera Rubin NVL144 |

|---|---|---|---|

| GPUs per Rack | 72 MI455X | 72 B300 | 144 R200 |

| Total GPU Memory | 31 TB HBM4 | 20.7 TB HBM3e | ~20 TB HBM4 |

| Memory Bandwidth | 1.4 PB/s | ~576 TB/s | Not disclosed |

| FP4 Performance | 2.9 ExaFLOPS | ~1.44 ExaFLOPS | 3.6 ExaFLOPS |

| FP8 Performance | 1.4 ExaFLOPS | ~720 PFLOPS | ~1.2 ExaFLOPS |

| Scale-Up Interconnect | 260 TB/s (UALink) | 130 TB/s NVLink | ~500 TB/s NVLink 6 (est.) |

| Scale-Out | 43 TB/s Ethernet | InfiniBand + Ethernet | InfiniBand + Ethernet |

| Availability | H2 2026 | 2025 (shipped) | H2 2026 |

| CPU Architecture | EPYC Venice (Zen 6) | Grace ARM | Vera ARM |

A few things to note when reading that table. First, AMD's 2.9 ExaFLOPS FP4 number is dense FP4, while NVIDIA sometimes quotes sparse figures that use 2:4 sparsity to double throughput. The comparison gets complicated depending on which precision format each vendor is using for their headline number. Second, memory capacity is AMD's clear advantage: 31 TB of HBM4 versus 20.7 TB of HBM3e in the GB300 NVL72. At the scale of a single rack, that 50% memory advantage could matter a lot for inference workloads where model size determines how many GPUs you need. Third, the Vera Rubin NVL144 will beat Helios on FP4 compute if both ship on schedule - but the NVL144 hasn't shipped either, and AMD has the memory capacity lead there too.

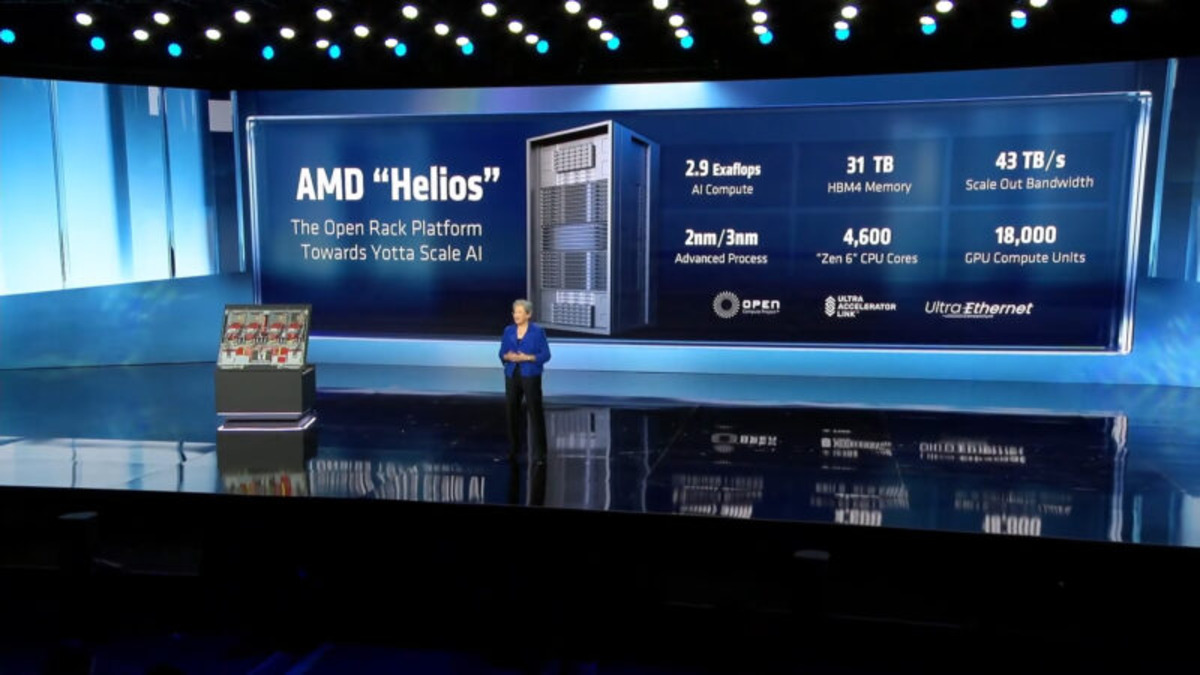

AMD's Helios specs slide from the CES 2026 announcement. The 2.9 ExaFLOPS FP4 and 31 TB HBM4 figures represent rack-level aggregates.

Source: servethehome.com

AMD's Helios specs slide from the CES 2026 announcement. The 2.9 ExaFLOPS FP4 and 31 TB HBM4 figures represent rack-level aggregates.

Source: servethehome.com

Key Capabilities

Scale-Up Interconnect: 260 TB/s via UALink

Helios uses AMD's Infinity Fabric combined with UALink for GPU-to-GPU communication within the rack, delivering 260 TB/s of scale-up bandwidth across all 72 MI455X accelerators. UALink is the open interconnect standard backed by AMD, Intel, Google, Microsoft, and Meta as an alternative to NVIDIA's proprietary NVLink. At 260 TB/s, Helios's internal fabric is twice the bisection bandwidth of the GB300 NVL72's 130 TB/s NVLink domain - at least on paper.

The important nuance: NVLink and UALink have different latency and coherency characteristics that matter for actual training and inference workloads. AMD hasn't published latency numbers for UALink in Helios. Raw bandwidth is a necessary but not sufficient condition for efficient large-scale model parallelism.

Scale-Out Networking: 43 TB/s Ethernet

Where NVIDIA racks use InfiniBand for scale-out (rack-to-rack) networking, Helios uses Ultra Ethernet at 43 TB/s aggregate across the rack. This is a deliberate ecosystem choice. Ethernet is ubiquitous, open, and cheaper to operate than InfiniBand. The tradeoff is that InfiniBand - especially NVIDIA's Quantum-X800 switches - delivers lower latency and has more mature support for RDMA-heavy AI training workloads.

AMD's bet is that Ultra Ethernet Consortium standards will close the gap by the time Helios ships at volume. That's plausible but not proven. Customers who have already invested heavily in InfiniBand infrastructure may see Helios's all-Ethernet scale-out as a mismatch with their existing networks, while customers building greenfield data centers may appreciate the operational simplicity.

Compute Tray Design

The 18-tray architecture - each tray with 4 MI455X GPUs and 1 EPYC Venice CPU - distributes CPU processing across the rack rather than concentrating it. EPYC Venice is AMD's Zen 6 generation EPYC processor, which hasn't shipped independently yet, so the Helios rack is effectively a bundled launch for both the MI455X GPU and the Venice CPU.

The OCP rack foundation means the physical design is not AMD-proprietary. Data centers that have built OCP infrastructure should be able to integrate Helios racks without custom civil engineering work. HPE's involvement as rack architecture partner adds a major systems integrator to AMD's supply chain, which matters for enterprise buyers who want a single vendor contact for the full stack.

HBM4 Memory: 31 TB at 1.4 PB/s

The memory subsystem is Helios's most defensible technical advantage. 31 TB of HBM4 across the rack - versus 20.7 TB of HBM3e in the GB300 NVL72 - means Helios can hold larger models in-rack without spilling to DRAM or NVMe. At 1.4 PB/s aggregate bandwidth, memory-bound inference workloads (which describes most LLM serving at scale) should see strong throughput if AMD's software stack can keep the GPUs fed.

HBM4 is also a first-generation technology. New memory standards normally carry a price premium and lower-than-rated yields early in production. AMD's ability to source 72 x 432 GB HBM4 allotments per rack at volume is a supply chain execution question, not just a technology question.

Pricing and Availability

AMD hasn't announced pricing for Helios. The MI455X GPU is also unpriced individually - AMD has consistently deferred pricing questions to closer to availability. The GB200 NVL72 launched at roughly $2-3 million per rack; the GB300 NVL72 is estimated at $3-4 million. A Helios rack with 72 MI455X GPUs, 18 EPYC Venice CPUs, HPE-designed rack infrastructure, and first-generation HBM4 memory won't be cheap.

Industry analysts expect per-GPU pricing for the MI455X in the $25,000-$45,000 range (based on estimates of MI400 series positioning), which would put the Helios rack at roughly $2-3 million in GPU cost alone before the rack, CPUs, networking, and cooling infrastructure. AMD will need to price competitively against the GB300 NVL72, which has the advantage of being already shipped and proven in production.

| Detail | Status |

|---|---|

| Helios Rack Price | Not announced |

| MI455X Per-GPU Price | Not announced |

| Target Availability | H2 2026 |

| First Confirmed Customer | Oracle (50,000-GPU commitment, Q3 2026) |

| OEM Partners | HPE, Celestica |

| Cloud Availability | Not confirmed |

AMD has confirmed H2 2026 availability. Oracle committed to a 50,000-GPU deployment of MI450-series chips in Helios racks, with initial availability in Q3 2026 and expansion planned for 2027. AMD has kept the MI300X and MI350X programs on roughly their announced schedules, which is encouraging. The simultaneous debut of Helios, the MI455X, and EPYC Venice still increases integration risk compared to launching a single product.

ROCm vs CUDA: The Honest Assessment

This is the part of any AMD GPU analysis where the spec numbers stop mattering and the software question takes over. ROCm - AMD's GPU software stack - is clearly better than it was three years ago. PyTorch support is solid, and MI300X deployments at Azure and Oracle have shown that ROCm can run production LLM inference workloads at scale. That's real progress.

But "better than before" isn't the same as "on par with CUDA." NVIDIA's CUDA ecosystem has a 15-year head start, and the gap isn't just in supported libraries. It's in compiler optimization depth, kernel tuning for specific model architectures, hardware-aware attention implementations (FlashAttention variants), quantization tooling, and the built up knowledge base of engineers who have spent years writing CUDA kernels. When a new model architecture - a new attention mechanism, a new MoE routing scheme - ships, it gets CUDA support first. ROCm support follows, sometimes weeks later, sometimes months.

For customers willing to do software work, Helios's hardware specs make a strong case. For customers who need to run whatever model ships next week without friction, CUDA's ecosystem advantage is real and persistent.

AMD's CDNA 4 architecture introduces new compute features - FP4 native support, new cache hierarchies, updated Infinity Fabric coherency - that all require ROCm updates to expose correctly. The MI455X's 40 PFLOPS FP4 peak is only achievable if ROCm's kernels actually create FP4 math instructions efficiently, if the compiler can schedule memory and compute units without stalls, and if higher-level frameworks (vLLM, SGLang, TRT-LLM equivalents for ROCm) have been tuned for the new architecture. None of that's guaranteed at H2 2026 launch.

AMD has been more transparent about ROCm gaps than it used to be, and there are genuine third-party investments - from Oracle, from Microsoft Research, from smaller inference shops - in making ROCm work well. But buyers evaluating Helios should budget for software engineering costs that aren't in the spec sheet.

Strengths and Weaknesses

Strengths

- 31 TB HBM4 at 1.4 PB/s is the largest per-rack memory pool of any 2026 AI rack system announced, with significant effects for large-model inference economics

- 2.9 FP4 ExaFLOPS rack-level performance is a strong number if AMD's FP4 implementation efficiency matches the peak claim

- OCP-based design with HPE as rack partner means Helios fits existing open compute data center infrastructure rather than requiring proprietary build-outs

- Ethernet scale-out avoids InfiniBand vendor lock-in and is operationally simpler for shops that run Ethernet fabrics

- EPYC Venice per-tray gives each compute tray a full Zen 6 CPU rather than sharing ARM cores across the whole rack

- Open UALink interconnect means the scale-up fabric isn't proprietary to AMD, in principle enabling future multi-vendor configurations

Weaknesses

- No benchmarks, no pricing, not shipped - everything impressive about Helios is still a spec sheet claim as of May 2026

- ROCm software gap is structural and won't be fully closed by H2 2026; running CDNA 4 efficiently requires software that hasn't been written yet

- 7,000 lb double-wide rack requires serious data center preparation; weight and footprint exceed most existing rack deployments

- HBM4 supply risk - 72 x 432 GB allocations per rack requires massive HBM4 supply at a point when HBM4 is still in early production ramp

- No TDP disclosed makes infrastructure planning (power, cooling capacity) impossible without vendor conversations

- NVLink latency advantage for NVIDIA within the rack is hard to quantify but real; UALink's latency profile at scale is unproven for large training runs

Related Coverage

- AMD Instinct MI455X - full specs and analysis of the GPU powering Helios

- NVIDIA GB300 NVL72 - the current shipping NVIDIA rack system Helios competes against

- NVIDIA Vera Rubin NVL144 - NVIDIA's next rack system, also targeting H2 2026

- AMD MI430X - AMD's HPC/FP64 CDNA 4 variant for scientific computing

Sources:

- AMD Instinct MI455X and Helios Hardware at CES 2026 - ServeTheHome

- AMD Contemplates and Engineers Yottascale AI Compute - NextPlatform

- AMD Says Helios Racks and MI400 Series GPUs On Track for 2H 2026 - NextPlatform

- Oracle First In Line for AMD Helios Racks - NextPlatform

- Unpacking AMD's Latest Datacenter CPU and GPU Announcements - The Register

- AMD CES 2026 Press Release - AMD Newsroom

- AMD and HPE Expand Collaboration for Open Rack-Scale AI - AMD IR

✓ Last verified May 15, 2026