Better Planning, Faster Benchmarks, CFO Reality Check

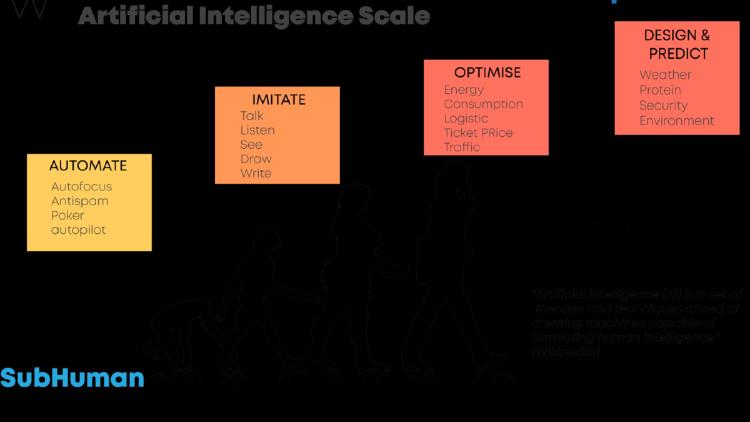

Three new arXiv papers show how to build more reliable planning agents, how to cut benchmark costs by 70%, and why LLMs fail at long-horizon financial decision-making.

ByteDance ships Seed1.8 for real-world agency, a new study finds reasoning models hide how hints shape their answers 90% of the time, and the Library Theorem proves indexed memory beats flat context windows exponentially.

Three arXiv papers push AI agents further: metacognitive self-modification, milestone-based RL lifting Gemma3-12B from 6% to 43% on WebArena-Lite, and hybrid workflows cutting inference costs 19x.

Three new papers expose a gap between what AI models know and what they do, and show why that gap is harder to close than anyone assumed.

Three arXiv papers rethink transformer theory, expose fatal flaws in in-context LLM memory, and introduce grey-box agent security testing.

Three new arXiv papers tackle constitutional AI rule learning, sleeper agent defense for multi-agent pipelines, and skill-evolving reinforcement learning for math reasoning.