Best AI Models for RAG - March 2026

Gemini 2.5 Flash leads RAG generation accuracy at 87% on LIT-RAGBench, while o3 tops multi-hop reasoning and Qwen3-235B is the best open-source option.

TL;DR

- Gemini 2.5 Flash leads English RAG generation at 87.0% on LIT-RAGBench (March 2026), despite costing just $0.30/1M input tokens

- o3 tops Japanese RAG at 88.2% and leads the English reasoning and logic categories; Qwen3-235B-A22B is the best open-source option at 80.6%

- Claude Sonnet 4.6 scores lowest among API models on overall accuracy (65.0%) but leads all models on abstention (96.7%) - critical for production deployments that can't afford hallucinated answers

RAG is a two-part problem. The first part - retrieval - is about finding the right chunks from your knowledge base. The second part - generation - is about producing an accurate answer from those chunks without adding information that isn't there. Most benchmark comparisons conflate the two. This article separates them.

For the retrieval layer, our MTEB embedding leaderboard covers embedding model rankings in detail. This article focuses on generation quality: given retrieved context, which LLM produces the most accurate, grounded, and appropriately cautious answers?

New benchmark data from LIT-RAGBench, published in March 2026, gives the clearest picture yet of where models actually stand on RAG generation tasks.

Rankings Table

Scores come from two benchmarks: LIT-RAGBench EN (accuracy across integration, reasoning, logic, table, and abstention tasks) and RAGAS Faithfulness (how often model responses stay grounded in retrieved context, 0-1 scale). LIT-RAGBench scores take priority where available - it's a more comprehensive and recent evaluation.

| Rank | Model | Provider | Benchmark | Score | Price (input, per 1M tokens) | Verdict |
|---|---|---|---|---|---|---|
| 1 | Gemini 2.5 Flash | Google | LIT-RAGBench EN | 87.0% | $0.30 | Best accuracy per dollar on the market |
| 2 | o3 | OpenAI | LIT-RAGBench EN | 85.2% | $2.00 | Leads Japanese RAG + hardest multi-hop tasks |
| 3 | GPT-4.1 | OpenAI | LIT-RAGBench EN | 83.6% | $2.00 | Consistent across all RAG task categories |
| 4 | GPT-5 | OpenAI | LIT-RAGBench EN | 82.8% | $1.25 | Strong groundedness; GPT-5.1 leads a separate RAG ELO eval |
| 5 | Qwen3-235B-A22B | Alibaba | LIT-RAGBench EN | 80.6% | Free (self-host) | Top open-source; Qwen3 embeddings also lead multilingual MTEB |
| 6 | Claude Sonnet 4.6 | Anthropic | LIT-RAGBench EN | 65.0% | $3.00 | Lowest accuracy among API models but near-perfect abstention (96.7%) |
| 7 | Llama 3.3 70B | Meta | LIT-RAGBench EN | 61.8% | Free (self-host) | Viable open-source base, lags frontier notably |
| 8 | DeepSeek-R1 | DeepSeek | RAGAS Faithfulness | 0.89 | $0.55 (API) | 94% on multi-hop QA; best for complex reasoning RAG |
| 9 | Cohere Command R+ | Cohere | RAGAS Faithfulness | 0.87 | $2.50 | Native grounding + inline citations by design |
| 10 | Mistral Large 3 | Mistral | RAGAS Faithfulness | 0.86 | $2.00 | Solid option, especially for EU data residency needs |
| 11 | Phi-4 14B | Microsoft | RAGAS Faithfulness | 0.83 | Free (self-host) | Impressive RAG performance for a 14B model |

Detailed Analysis

Gemini 2.5 Flash - The Unexpected Leader

The story here is straightforward, and it surprised me too: Gemini 2.5 Flash, a lightweight model priced at $0.30/1M input tokens, leads the LIT-RAGBench English category at 87.0%. That's above o3 at 85.2%, above GPT-4.1 at 83.6%, and above GPT-5 at 82.8%.

LIT-RAGBench covers five distinct RAG task types: Information Integration, Reasoning, Logic, Table comprehension, and Abstention (correctly refusing when context is insufficient). Gemini 2.5 Flash scores 90.3% on the table comprehension tasks specifically, the best of any model tested. Its logic score is 83.3%. The reasoning category is where it gives ground, scoring 82.6% against o3's 87.0%.

This is a RAG-specific result, not a general intelligence ranking. Flash-class models often excel at structured, context-bound retrieval tasks because their training leans toward instruction following over open-ended generation. If you're running a production RAG pipeline and budget matters, Gemini 2.5 Flash is the strongest value in this benchmark.

Google also released Gemini Embedding 2 on March 10, 2026, the first natively multimodal embedding model. It maps text, images, video, audio, and documents into a unified semantic space. Early partners report latency reductions up to 70% from removing separate transcription pipelines. Running Gemini 2.5 Flash with Gemini Embedding 2 gives you an integrated Google-native RAG stack for under $0.40/1M tokens combined.

o3 - Best for Complex and Multilingual RAG

OpenAI's o3 leads the LIT-RAGBench Japanese category at 88.2% - ahead of every other model including Gemini 2.5 Flash on that split. For multilingual RAG deployments serving non-English user bases, o3 is the current benchmark leader.

On Reasoning and Logic tasks specifically, o3 scores 87.0% and 90.0% in English, both top marks. These are exactly the RAG scenarios that trip up other models: questions requiring integration of information from two or more retrieved documents, or questions where the retrieved context contains conflicting signals. o3's chain-of-thought reasoning architecture handles these better than standard instruction-tuned models.

The tradeoff is cost. At $2.00/$8.00 per 1M input/output tokens, o3 costs nearly 7x as much as Gemini 2.5 Flash on input tokens ($2.00 vs. $0.30). For high-volume production workloads, that pricing gap only makes sense if your queries are truly multi-hop or complex. For standard Q&A over a document corpus, Flash is the better call.
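To make the input-price gap concrete, here is a small sketch of the arithmetic. The two prices come from the rankings table; the monthly workload (1M queries at 4,000 context tokens each) is a made-up assumption, and output tokens are left out.

```python
# Input prices in USD per 1M tokens, taken from the rankings table.
INPUT_PRICE_PER_1M = {"gemini-2.5-flash": 0.30, "o3": 2.00}


def input_cost(model: str, tokens: int) -> float:
    """USD cost of `tokens` input tokens for `model` (output tokens not included)."""
    return INPUT_PRICE_PER_1M[model] * tokens / 1_000_000


# Hypothetical workload (an assumption): 1M queries/month, 4,000 context tokens each.
monthly_tokens = 1_000_000 * 4_000
flash = input_cost("gemini-2.5-flash", monthly_tokens)
o3 = input_cost("o3", monthly_tokens)
ratio = o3 / flash
print(f"Flash ${flash:,.0f}  o3 ${o3:,.0f}  ratio {ratio:.1f}x")
```

Under those assumed volumes, input tokens alone come to roughly $1,200 on Flash versus $8,000 on o3. Swap in your own query counts and context sizes before drawing conclusions.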

Separately, a RAG-specific ELO evaluation from agentset.ai showed GPT-5.1 leading with an ELO of 1,711 and GPT-5.2 at 1,588. That evaluation doesn't overlap directly with LIT-RAGBench, and methodology differences mean these numbers can't be combined into a single ranking. I'm reporting both because they capture different things.
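For a rough sense of what a 123-point gap means, the sketch below applies the standard Elo expected-score formula. That the agentset.ai evaluation uses this exact formula and the usual 400-point scale is my assumption, not something confirmed here.

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Expected head-to-head score of A against B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


# Ratings quoted above: GPT-5.1 at 1,711 and GPT-5.2 at 1,588.
p = elo_expected_score(1711, 1588)
print(f"Expected pairwise score for the higher-rated model: {p:.2f}")
```

If the standard formula applies, the higher-rated model would be preferred in roughly two out of three pairwise comparisons.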

Claude Sonnet 4.6 - Wrong for the Headline, Right for the Job

Claude Sonnet 4.6 scores 65.0% on LIT-RAGBench English, the lowest of all API models tested. On that ranking alone, it looks like a poor choice for RAG. That reading misses something important.

Claude Sonnet 4.6 scores 96.7% on Abstention tasks - correctly refusing to answer when the retrieved context doesn't support an answer. Every other model in the benchmark scores below 94% on this category. In production RAG, false answers are worse than no answers. A system that confidently produces wrong information from context breaks user trust in ways that "I don't have enough information to answer that" never does.

If your application has low tolerance for hallucination - medical, legal, financial domains - Claude's abstention behavior is worth paying for, even at $3.00/$15.00 per 1M tokens. The 65% overall accuracy isn't the whole picture. It reflects in part that Claude refuses more aggressively, which the benchmark counts against it.
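The toy scorer below illustrates how aggressive refusal can pull overall accuracy down while pushing abstention accuracy up. It is not LIT-RAGBench's scoring code: exact-match grading, the `None` abstention marker, and the five sample questions are all assumptions for illustration.

```python
from typing import List, Optional, Tuple

ABSTAIN = None  # marker for "the model declined to answer"


def score(preds: List[Optional[str]], gold: List[Optional[str]]) -> Tuple[float, float]:
    """Return (overall accuracy, abstention accuracy).

    gold[i] is None when the context cannot support an answer, so the
    correct behaviour on that item is to abstain.
    """
    correct = [p == g for p, g in zip(preds, gold)]
    unanswerable = [c for c, g in zip(correct, gold) if g is ABSTAIN]
    return sum(correct) / len(gold), sum(unanswerable) / len(unanswerable)


gold = ["paris", "1998", "blue", ABSTAIN, ABSTAIN]
cautious = [ABSTAIN, "1998", ABSTAIN, ABSTAIN, ABSTAIN]  # over-refuses
eager = ["paris", "1998", "blue", "42", ABSTAIN]         # answers one it should not

cautious_scores = score(cautious, gold)
eager_scores = score(eager, gold)
```

On this made-up data the cautious model gets 0.6 overall with perfect abstention, while the eager model gets 0.8 overall but abstains correctly only half the time. Which profile is better depends on what a wrong answer costs you.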

This is also where reading long-context retrieval scores alongside RAG generation scores matters: Claude Opus 4.6 leads the MRCR v2 8-needle benchmark at 76% accuracy at 1M tokens. For RAG pipelines that need to pull facts from very large documents, Opus is still worth evaluating alongside Flash.

Qwen3-235B-A22B - Open-Source RAG Done Right

Alibaba's Qwen3-235B-A22B scores 80.6% on LIT-RAGBench English, making it the only open-source model competitive with frontier API models. The Reasoning category score is 78.3% - below the API model leaders, but ahead of Llama 3.3 70B by a significant margin.

The architecture is a mixture-of-experts design with 235B total parameters but roughly 22B active per forward pass. That keeps inference costs manageable if you're self-hosting. The Qwen3 family also leads the MTEB Multilingual benchmark (Qwen3-Embedding-8B at 70.58), meaning you can use Qwen3-235B for generation while also deploying Qwen3's embedding models for the retrieval layer.

RAGAS faithfulness testing from Prem AI shows Qwen3-30B-A3B (the smaller sibling) at 0.91 - the highest faithfulness score of any open-source model in their evaluation. The 235B variant scores similarly on LIT-RAGBench reasoning tasks. If you need a self-hosted RAG stack that doesn't call external APIs, Qwen3-235B is the clearest choice right now.

Cohere Command R+ - Purpose-Built for Citations

Cohere's Command R+ isn't the top performer on LIT-RAGBench (it wasn't included in that paper's evaluation), but it occupies a distinct niche. The model was designed specifically for RAG - it produces inline citations pointing to source document chunks by default. That behavior is something most models have to be prompted into, and results are inconsistent. For Command R+, it's the default output format.
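For comparison, this is roughly what "building citation logic on top of a general-purpose model" involves. The sketch is not Cohere's API: `build_prompt`, `invalid_citations`, and the `[n]` marker format are hypothetical names and conventions chosen for illustration.

```python
import re
from typing import List, Set


def build_prompt(question: str, chunks: List[str]) -> str:
    """Number each chunk so the model can cite it as [1], [2], ..."""
    numbered = "\n".join(f"[{i}] {chunk}" for i, chunk in enumerate(chunks, start=1))
    return (
        "Answer using only the sources below and cite them like [1].\n"
        f"Sources:\n{numbered}\n\nQuestion: {question}"
    )


def invalid_citations(answer: str, num_chunks: int) -> Set[int]:
    """Return cited source numbers that do not match any provided chunk."""
    cited = {int(n) for n in re.findall(r"\[(\d+)\]", answer)}
    return {n for n in cited if not 1 <= n <= num_chunks}


chunks = ["Plan A costs $10 per month.", "Plan B costs $25 per month."]
prompt = build_prompt("How much is Plan B?", chunks)
# A made-up model answer that cites one real source and one that does not exist.
bad = invalid_citations("Plan B costs $25 per month [2], with a discount [5].", len(chunks))
```

Here `bad` contains the dangling citation number 5. A check like this catches citations to nonexistent sources, but it does not verify that a cited chunk actually supports the claim.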

RAGAS faithfulness: 0.87, on par with Llama 3.3 70B. The Cohere stack includes Embed v4 (second on BEIR retrieval benchmarks at 53.7% NDCG@10) and Rerank 3.5 ($2.00/1,000 searches) to complete the pipeline. For enterprise teams that need source attribution in every response and don't want to build citation logic on top of a general-purpose model, this is the most coherent end-to-end option.

Cohere released Command A 03-2025 as the successor to Command R+, claiming 150% throughput on the same hardware and improved reasoning. It wasn't included in the RAGAS or LIT-RAGBench evaluations I found, so I can't place it in the ranking table with verified numbers. Worth assessing if you're going all-in on the Cohere stack.

Gemini Embedding 2, released March 10, 2026, maps text, images, video, audio, and documents into a single vector space - enabling multimodal RAG pipelines with a unified index.

Source: blog.google

Methodology

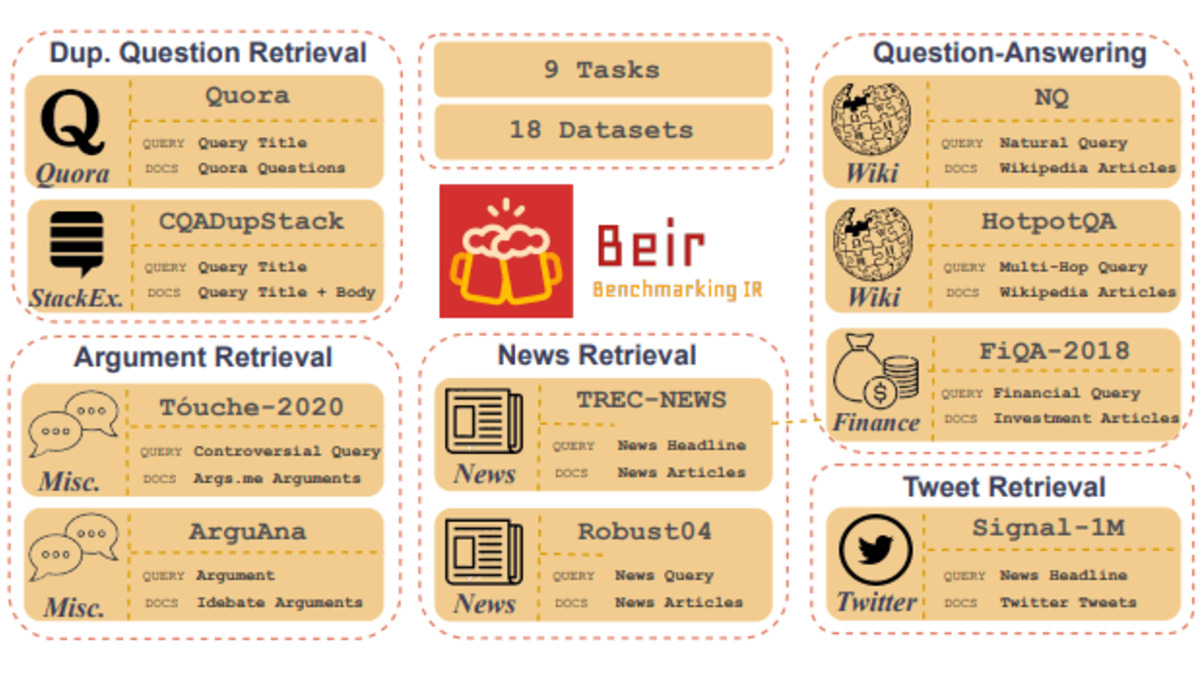

The primary benchmark in this article is LIT-RAGBench (arxiv.org/abs/2603.06198), published March 2026. It covers 5 RAG task categories: Information Integration, Reasoning, Logic, Table comprehension, and Abstention. Models are assessed on their ability to answer questions from provided context, with accuracy measured against gold-standard answers. The paper tested both Japanese and English variants. All scores cited here are from the paper directly.

RAGAS Faithfulness scores for models not in LIT-RAGBench come from Prem AI's evaluation (blog.premai.io). Methodology isn't fully documented - dataset composition, chunk sizes, and retrieval settings aren't specified. Treat those scores as directional rankings, not hard numbers. I've flagged which metric applies to each row in the table.
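For orientation, RAGAS defines faithfulness as the share of claims in an answer that the retrieved context supports. The sketch below is not the RAGAS library: `is_supported` is a hypothetical stand-in (naive substring matching) for the LLM judge RAGAS relies on, and the claims are split by hand.

```python
from typing import Callable, List


def is_supported(claim: str, context: str) -> bool:
    """Hypothetical stand-in for an LLM judge: case-insensitive substring match."""
    return claim.lower() in context.lower()


def faithfulness(claims: List[str], context: str,
                 judge: Callable[[str, str], bool] = is_supported) -> float:
    """Fraction of answer claims supported by the retrieved context (0-1)."""
    if not claims:
        raise ValueError("need at least one claim")
    supported = sum(1 for claim in claims if judge(claim, context))
    return supported / len(claims)


context = "The warranty lasts two years. Returns are accepted within 30 days."
claims = [
    "the warranty lasts two years",         # supported
    "returns are accepted within 30 days",  # supported
    "shipping is free",                     # not in the context
]
score = faithfulness(claims, context)
```

Two of the three claims are supported, so the score is about 0.67. Because the judge and the claim splitter both shape the result, scores from different setups are hard to compare directly.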

The RGB Benchmark (arxiv.org/abs/2309.01431) is frequently cited in RAG literature but covers older models (ChatGPT, Vicuna, Qwen, BELLE from 2023). Frontier 2026 models haven't been methodically assessed on RGB. A March 2026 GraphRAG paper did apply it to GPT-3.5 and GPT-4o-mini and found that knowledge graph augmentation improved counterfactual robustness from 7% to 94.94% - relevant to RAG system design, but not a model comparison.

Benchmark contamination is a real concern. LIT-RAGBench uses a bilingual dataset not widely available before publication, which reduces contamination risk. RAGAS evaluations depend heavily on dataset composition, and different labs running RAGAS against different corpora produce incomparable scores.

No model in the LIT-RAGBench evaluation exceeded 90% overall accuracy. The benchmark remains a genuine challenge for current models.

Retrieval Layer - Don't Ignore the Embedding Model

The generation model gets most of the attention, but a strong generator can't compensate for bad retrieval. If the wrong chunks reach the model, the best answer it can give is still based on the wrong information.
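A minimal sketch of the two stages, assuming a toy bag-of-words "embedding" and a placeholder generator. In a real pipeline `embed` would call an embedding model and `generate` would call an LLM; all names here are hypothetical.

```python
import math
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding'; a real pipeline would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, corpus: List[str], k: int = 1) -> List[str]:
    """Stage 1: rank chunks by similarity to the query and keep the top k."""
    q = embed(query)
    return sorted(corpus, key=lambda chunk: cosine(q, embed(chunk)), reverse=True)[:k]


def generate(query: str, chunks: List[str]) -> str:
    """Stage 2 placeholder: a real system would send this prompt to an LLM."""
    return f"Context: {' '.join(chunks)}\nQuestion: {query}"


corpus = [
    "refunds are processed within 14 days",
    "the office is closed on public holidays",
]
top = retrieve("how long do refunds take", corpus)
prompt = generate("how long do refunds take", top)
```

Whatever `retrieve` returns is all the generator ever sees. If the holiday chunk had ranked first, no generator could recover the refund policy from it.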

Embedding model rankings show Voyage-Large-2 leading BEIR 2.0 at 54.8% NDCG@10, followed by Cohere Embed v4 (53.7%) and BGE-Large-EN (52.3%). OpenAI's text-embedding-3-large sits at 51.9%. For multilingual RAG, Qwen3-Embedding-8B leads MTEB Multilingual at 70.58.

Gemini Embedding 2 (released March 10, 2026) is the most significant embedding development in the past year. It's the first model to embed text, images, video, audio, and documents in a unified space. Real-world tests show Everlaw (legal documents, 1.4M corpus) measuring 87% recall vs. 84% for Voyage and 73% for OpenAI's embedding model. Those numbers are from Google's developer blog, so treat them with appropriate skepticism, but the direction is consistent with the model architecture.

BM25, the lexical search baseline, scores 41.2% on BEIR - more than 13 percentage points below the top neural embedding models. If you're still using BM25 as your primary retrieval layer, you're leaving significant accuracy on the table.
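The BEIR numbers above are NDCG@10 scores. As a reference point, here is a small sketch of the metric using linear gain and a log2 rank discount. It assumes every relevant document appears somewhere in the ranked list, which real evaluations do not assume; they compute the ideal ordering from the full set of relevance judgments.

```python
import math
from typing import List


def dcg_at_k(relevances: List[float], k: int) -> float:
    """Discounted cumulative gain over the top-k results (linear gain)."""
    return sum(rel / math.log2(rank + 1) for rank, rel in enumerate(relevances[:k], start=1))


def ndcg_at_k(relevances: List[float], k: int = 10) -> float:
    """DCG normalised by the DCG of the ideal (descending) ordering."""
    ideal = dcg_at_k(sorted(relevances, reverse=True), k)
    return dcg_at_k(relevances, k) / ideal if ideal else 0.0


# Relevance of each returned result, in ranked order (1 = relevant, 0 = not).
perfect = ndcg_at_k([1, 1, 0, 0])  # both relevant results ranked first
swapped = ndcg_at_k([0, 1, 1, 0])  # an irrelevant result takes the top slot
```

Pushing the relevant results down by one position drops the score from 1.0 to about 0.69, which shows how heavily the metric weights the first few ranks.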

A RAG pipeline splits into two distinct stages: the retrieval layer (embedding model + vector store) and the generation layer (LLM). Weak retrieval limits what even the best generator can produce.

Source: evidentlyai.com

Historical Progression

Mid-2023 - RGB Benchmark published, establishing the first systematic RAG evaluation framework. GPT-3.5 scores 55% on information integration tasks (English). The benchmark reveals that even frontier models fail to identify counterfactual errors in retrieved context 93% of the time.

2024 - RAGAS framework gains wide adoption as an evaluation toolkit. RAGTruth (18,000 examples) and RAGBench (100,000 examples) publish more detailed hallucination taxonomies. The community splits between teams using retrieval-focused embeddings (BEIR, MTEB) and generation-focused evaluation (RAGAS, RGB).

Spring 2024 - Cohere Command R and Command R+ are released with native grounded generation and citations. First models designed specifically for RAG rather than adapted from general-purpose base models.

Late 2025 - Context window expansion across major providers reduces the "retrieval vs. long-context" debate. Teams start asking whether long context can replace RAG completely. FRAMES and RULER benchmarks emerge to evaluate exactly this question. Short answer from the data: long context doesn't replace RAG for most production use cases - retrieval remains more cost-efficient and often more accurate.

March 2026 - LIT-RAGBench publishes comprehensive five-category evaluation. Gemini 2.5 Flash leads English RAG accuracy. Gemini Embedding 2 launches native multimodal embedding, enabling unified RAG pipelines across text, images, video, and audio. First significant benchmark data placing open-source (Qwen3-235B) above several API models on generation tasks.

FAQ

What's the best model for RAG right now?

Gemini 2.5 Flash leads LIT-RAGBench English at 87.0% and costs $0.30/1M input tokens. For multi-hop or complex RAG, o3 at 85.2% handles reasoning better. Qwen3-235B-A22B at 80.6% is the top open-source option.

Is Claude good for RAG?

Claude Sonnet 4.6 scores 65.0% on RAG accuracy benchmarks - below Gemini Flash and o3 - but leads all models on abstention at 96.7%. If your application can't afford hallucinated answers, Claude's refusal behavior may outweigh the lower accuracy score.

What's the cheapest model that's still good at RAG?

Gemini 2.5 Flash at $0.30/1M input tokens leads the benchmark while being the cheapest paid option in the rankings table. Qwen3-235B-A22B is free to self-host and scores 80.6%. For very high volume, DeepSeek-R1's API pricing starts around $0.55/1M input.

Is open-source competitive for RAG?

Yes, for the first time at the generation layer. Qwen3-235B-A22B scores 80.6% on LIT-RAGBench, beating Claude Sonnet 4.6 (65.0%) and Llama 3.3 70B (61.8%). For multi-hop tasks, DeepSeek-R1 reports 94% accuracy. Self-hosted RAG with open-weight models is now viable for teams with the infrastructure.

Should I use a specialized RAG model like Cohere Command R+?

If source attribution and inline citations are requirements, yes. Command R+ produces citations by default rather than requiring prompt engineering. For pure accuracy, Gemini 2.5 Flash or o3 score higher. Cohere's value is in the integrated stack (Embed + Rerank + Command) and native citation behavior.

How often do these rankings change?

Fast. LIT-RAGBench, published in March 2026, shows Gemini 2.5 Flash leading, but Gemini Embedding 2 just shipped and hasn't been fully evaluated in combined RAG pipelines yet. New model releases from OpenAI, Anthropic, and Google normally reshuffle the top 5 within a quarter. Check the last-verified date at the bottom of this page.

Sources:

- LIT-RAGBench: Benchmarking Generator Capabilities of LLMs in RAG

- Best Open-Source LLMs for RAG in 2026: 10 Models Ranked

- 7 RAG Benchmarks Explained

- Gemini Embedding 2: Our First Natively Multimodal Embedding Model

- Gemini Embedding: Powering RAG and Context Engineering

- Benchmarking LLM Faithfulness in RAG with Evolving Leaderboards

- RGB: Benchmarking Large Language Models in RAG

- GPT-5.2 RAG Performance: We Tested It

- BEIR Benchmark 2.0 Update

- RAGBench: Explainable Benchmark for RAG Systems

- Cohere RAG Documentation

- LLM API Pricing 2026

✓ Last verified March 13, 2026